Imagine you are visiting an unfamiliar European city. You arrive at the central train station toward nightfall. Now you must find your hotel. You feel uncomfortable asking random people on the street for directions. You are not very skilled at using maps, and in any case you don't want to be bothered looking down or reading street signs as you go. Luckily, you have an Android phone with the SpaceBook app, which speaks directions into your ear as you make your way to the hotel. With your eyes free and your suitcase in tow, you listen as it guides the way: "OK, continue another 100 meters, and turn right into the alleyway immediately after passing a Tesco on your right."...

Now, after a good night's sleep, you want to explore the city and learn about its history, attractions and other features. As you walk down the street from the hotel, a stunning neoclassical building on your left comes into view. Without pushing any buttons, you simply say, "SpaceBook! What is the building on my left?" SpaceBook answers, "That is the National Art Museum. It houses ... (you listen for 20 seconds to the basic introduction)". Then you interrupt: "When does it close today?" SpaceBook answers, "It closes at 4 o'clock today." You continue walking. You have plenty of time (and now enhanced capability) to explore the city on foot.

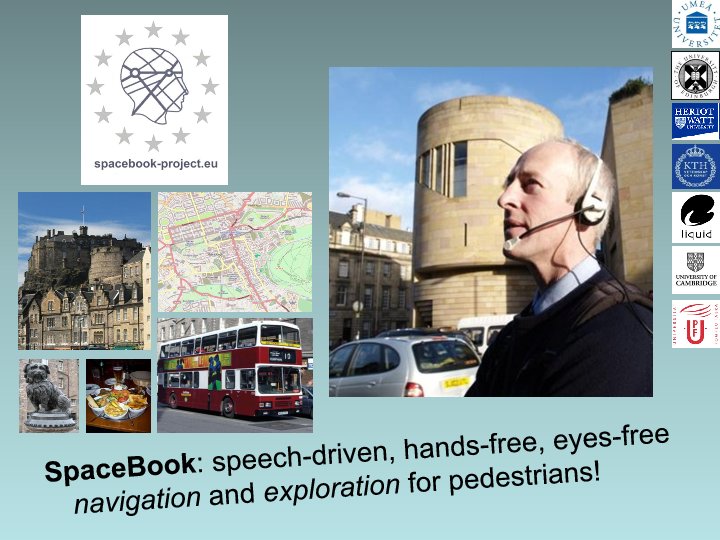

The SpaceBook project has pioneered the use of speech-based dialogue to support pedestrian navigation and exploration of urban environments. Many new approaches to this problem have emerged, ranging from Google Glass, to more routine multi-modal touch screens, to haptic interfaces. To our knowledge, however, SpaceBook is the first system to rely solely on natural language speech input and text-to-speech responses to support real-time, in situ navigation and exploration of the urban environment. Although there has been recent industrial interest in voice-based mobile city information systems (e.g. Apple's Siri), SpaceBook is unique in modeling the pedestrian's field of view to provide situated, speech-based dialogue that supports both navigation and exploration.

The primary hypothesis of the SpaceBook project is that such a speech-only interface is feasible and can be used reliably by a wide range of subjects. A secondary hypothesis is that such a set-up could also be rated as more enjoyable and informative than the alternatives. To test both hypotheses, the SpaceBook team built a series of prototype systems, culminating in a single system that was tested with subjects on the streets of Edinburgh. The results of this evaluation (and others) lend support to our hypotheses that such systems can be made reliable and enjoyable. This suggests that the technology behind SpaceBook could and should be further developed and refined toward large-scale development, deployment and use.

For more detail, see our Summary of SpaceBook project results, which links to the publications generated by the project.

SpaceBook received funding from the Framework 7 Programme: Information and Communication Technologies - Challenge 2: Cognitive Systems, Interaction, Robotics.